By Divyanka Salona, Principal Business Analyst, REI Systems, and Victor Carlstrom, CX Lead, REI Systems

Most customer experience problems do not begin at launch. They begin much earlier, when teams define what a system is supposed to do, whose needs matter most, and which assumptions go untested. In federal programs, early work shapes workflows, constraints, business rules, and decision points that users will live with for years.

That is why requirements gathering is a critical part of customer experience work. When a flawed assumption surfaces during user acceptance testing or after deployment, it is usually treated as a bug. More often, it is the downstream result of a requirement that was never validated closely enough in the first place.

Why Requirements Gathering Matters More Than Ever

Requirements engineering has always been foundational, but it matters even more in today’s modernization environment. Agencies are operating across aging systems, shifting policies, fragmented data, tighter funding scrutiny, and rising expectations for digital service quality. In that setting, weak requirements do not simply create delivery inefficiency; they directly shape whether users can complete tasks with confidence.

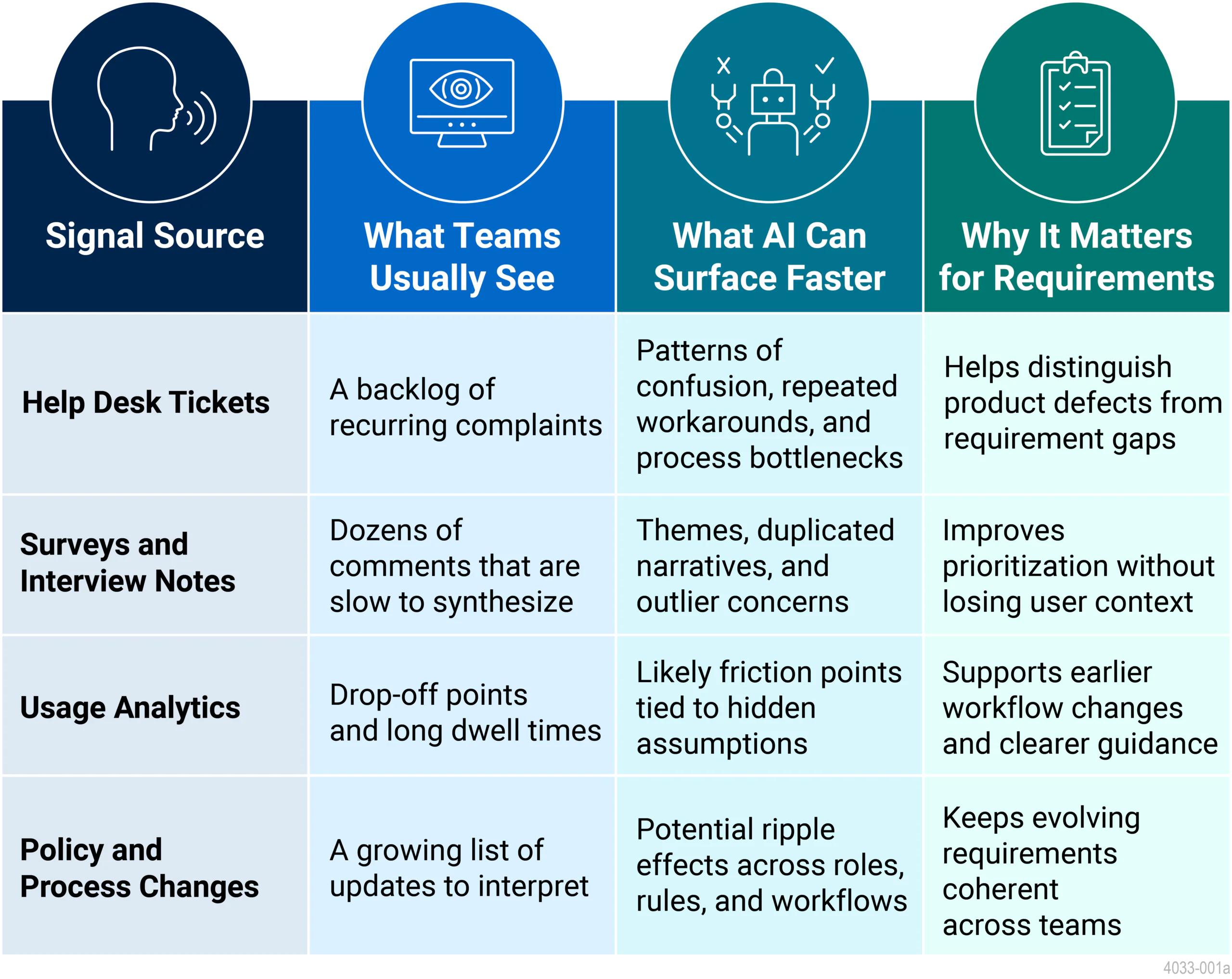

The discipline itself has evolved for the same reason. Workshops, interviews, and documentation reviews are still necessary, but they are no longer enough on their own. Teams now have access to far more evidence, including help-desk tickets, service records, survey feedback, workflow analytics, and policy updates. The challenge is not a lack of information. It is making sense of that information fast enough to improve decisions while the requirements are still forming.

Where Traditional Processes Break Down

A recurring problem is the gap between stakeholders and everyday users. Stakeholders bring mission priorities, compliance boundaries, and budget realities. Users bring the lived reality of how work gets done under pressure. Strong requirements practices need both perspectives. When one outweighs the other, the system may satisfy formal needs while still creating friction in real work.

Scale creates a second challenge. Even when teams know they should examine support data or qualitative feedback, manual review is time-consuming and inconsistent. Important patterns are easy to miss, especially when the signals are spread across dozens or hundreds of records.

A third challenge is change. Requirements rarely remain static in federal delivery. Policies evolve, business rules shift, and integrations create ripple effects across teams. Without a way to rapidly assess downstream implications, requirements become brittle and change management becomes reactive.
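
One way to make downstream implications easier to assess is to keep requirements in a simple traceability map and walk it whenever something changes. The sketch below is illustrative only: the identifiers (`POLICY-12`, `REQ-3`, and so on) and the `downstream_impacts` helper are hypothetical, and a real program would draw this map from its own requirements tool.

```python
from collections import deque

def downstream_impacts(dependents, changed):
    """Return every item reachable from `changed`, in breadth-first order.

    `dependents` maps an item to the items that directly depend on it.
    """
    seen = {changed}
    queue = deque([changed])
    impacted = []
    while queue:
        item = queue.popleft()
        for dependent in dependents.get(item, []):
            if dependent not in seen:
                seen.add(dependent)
                impacted.append(dependent)
                queue.append(dependent)
    return impacted

# Hypothetical traceability map: a policy drives requirements,
# and requirements drive forms and reports.
trace = {
    "POLICY-12": ["REQ-3", "REQ-7"],
    "REQ-3": ["FORM-budget"],
    "REQ-7": ["FORM-budget", "REPORT-quarterly"],
}

impacted = downstream_impacts(trace, "POLICY-12")
```

Here a change to the policy touches both requirements, the budget form, and the quarterly report. The list is a starting point for analyst review, not a decision in itself.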

Where AI Adds the Most Value

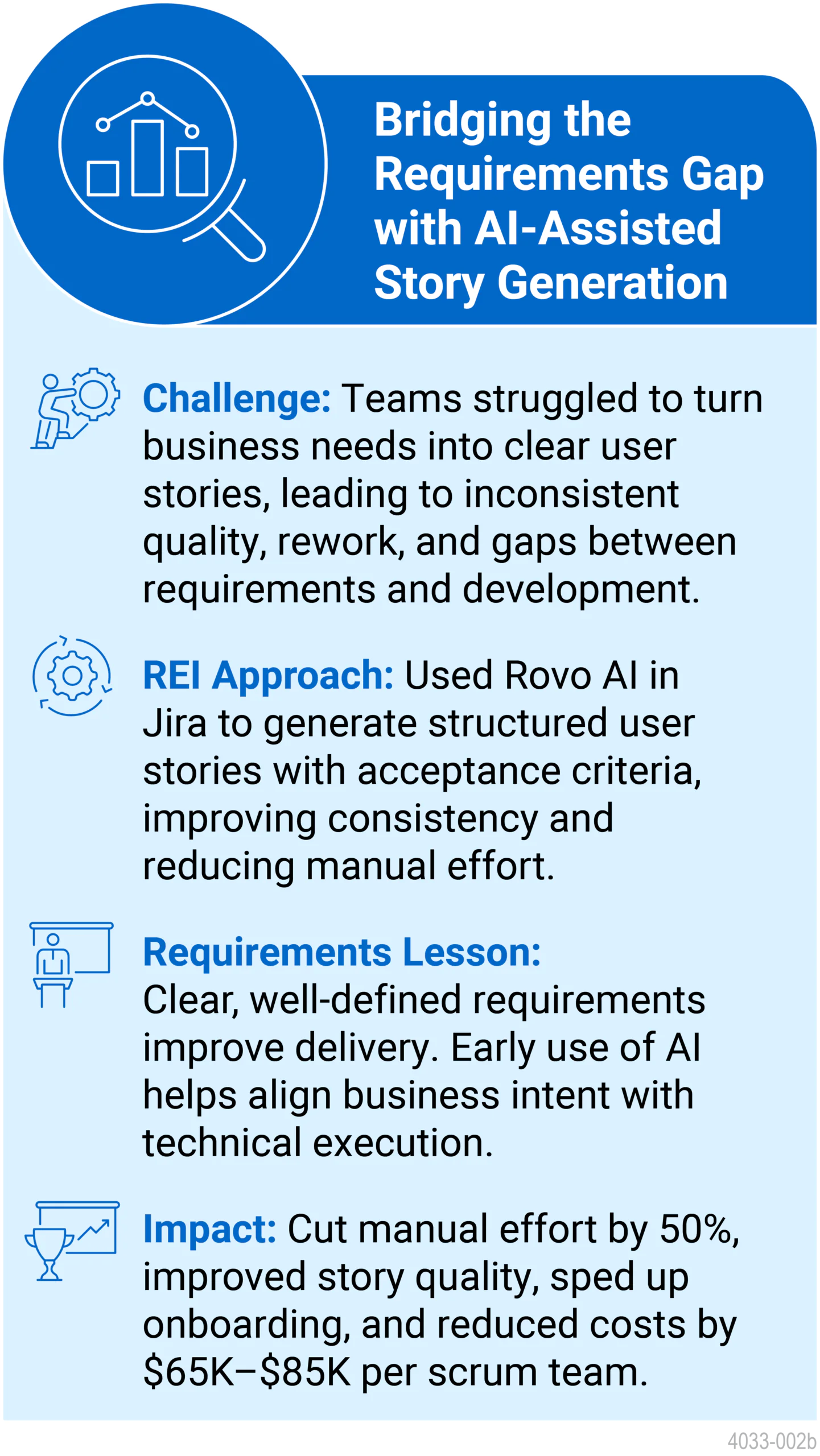

This is where AI becomes useful: not as a replacement for analysts, product owners, business architects, or user researchers, but as a way to accelerate sense-making. AI can help teams identify themes, cluster related issues, spot repeated narratives, summarize qualitative inputs, and surface likely areas of friction earlier in the process.

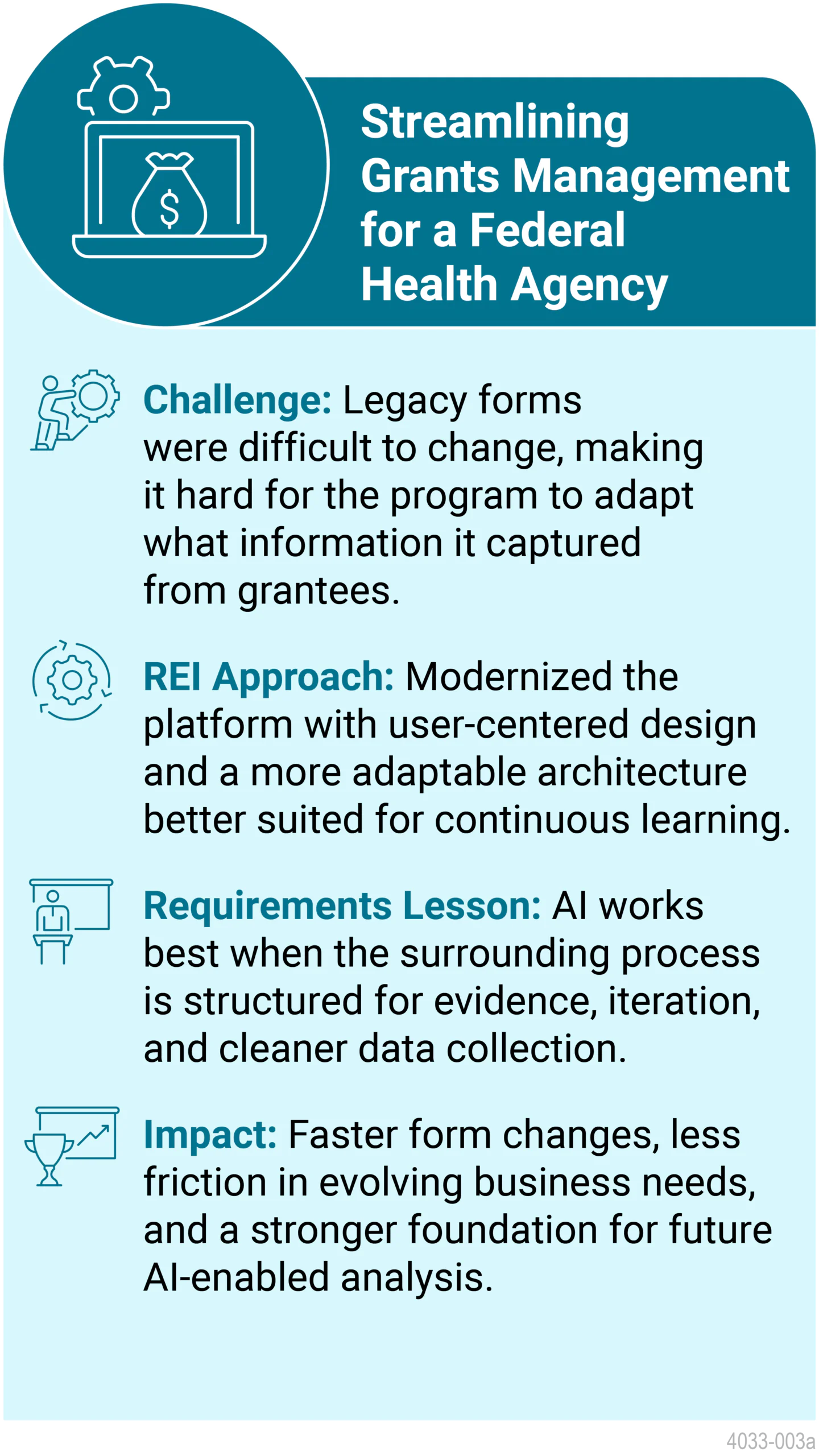

At REI Systems, that value only holds when the work remains grounded in governance and context. In federal environments, requirements decisions still need to be explainable, traceable, and aligned with mission outcomes. AI is most effective when it strengthens how teams work with evidence rather than replacing the judgment behind those decisions. It can make evidence easier to work with, but it does not remove the need for accountability.

What This Looks Like in Practice at REI

Across programs, AI-supported techniques can strengthen requirements work in several ways. Operational data, such as help desk records, can reveal recurring user confusion that points back to unclear requirements. Survey comments and interview notes can be synthesized more quickly to identify what users are struggling to understand. Usage analytics can show where people abandon workflows or spend too long on forms, helping teams test whether a requirement assumes knowledge the user does not actually have.
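
As a rough illustration of the usage-analytics point, the sketch below computes how often sessions stop at each step of a workflow. The step names, the sample data, and the `drop_off_by_step` helper are all assumptions made for the example, not a description of any specific REI Systems tool.

```python
from collections import Counter

# Hypothetical workflow steps, in the order a user encounters them.
STEPS = ["start", "eligibility", "budget_form", "review", "submit"]

def drop_off_by_step(furthest_steps):
    """For each step except the last, return the share of sessions
    that reached the step but went no further."""
    stopped = Counter(furthest_steps)
    reached = Counter()
    for step in furthest_steps:
        # A session that got to `step` also passed every earlier step.
        for earlier in STEPS[: STEPS.index(step) + 1]:
            reached[earlier] += 1
    return {
        step: stopped[step] / reached[step]
        for step in STEPS[:-1]  # stopping at the last step is completion
        if reached[step]
    }

# Illustrative data: the furthest step each session reached.
sessions = [
    "start", "budget_form", "budget_form", "budget_form",
    "submit", "submit", "eligibility", "review",
]

rates = drop_off_by_step(sessions)
worst_step = max(rates, key=rates.get)
```

In this made-up sample, half of the sessions that reach `budget_form` stop there. That is a prompt to ask whether the requirement behind the form assumes knowledge users do not have. It is not proof on its own.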

Just as important, these techniques help teams separate symptoms from causes. A recurring support issue may appear to be a product defect, but closer analysis often reveals that the real problem began earlier in the requirements phase. Framed this way, AI strengthens requirements gathering by helping teams move from anecdote to evidence more quickly.

Using AI Responsibly

AI outputs can sound confident even when source data is incomplete, duplicated, or poorly contextualized. That risk is especially important in requirements gathering, where teams may be tempted to treat a generated summary as a complete picture. Responsible use starts with basic guardrails: validate source data, remove duplicates, cross-check qualitative themes against quantitative evidence where possible, and document how AI-informed decisions were made.
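
Two of these guardrails, removing duplicates and cross-checking themes against counts, can be sketched in a few lines. The example assumes support records are plain text and that each AI-suggested theme can be tied to a few keywords. The function names, the sample records, and the `min_support` threshold are hypothetical.

```python
import re

def normalize(text):
    """Lowercase and collapse whitespace so near-identical records match."""
    return re.sub(r"\s+", " ", text.strip().lower())

def deduplicate(records):
    """Keep the first occurrence of each record, ignoring case and spacing."""
    seen = set()
    unique = []
    for record in records:
        key = normalize(record)
        if key and key not in seen:
            seen.add(key)
            unique.append(record)
    return unique

def cross_check(themes, records, min_support=2):
    """Count how many records mention each theme's keywords and flag
    themes that fall below `min_support` for closer human review."""
    normalized = [normalize(r) for r in records]
    result = {}
    for theme, keywords in themes.items():
        support = sum(
            any(k.lower() in rec for k in keywords) for rec in normalized
        )
        result[theme] = {"support": support, "supported": support >= min_support}
    return result

# Illustrative records and AI-suggested themes.
raw = [
    "Cannot find the budget form",
    "cannot find the  budget form ",
    "Budget form rejects my total",
    "Login timed out",
    "",
]
themes = {
    "budget form confusion": ["budget form"],
    "login issues": ["login", "password"],
}

unique = deduplicate(raw)
check = cross_check(themes, unique)
```

A theme that falls below the threshold is not necessarily wrong. The flag simply marks where a generated summary rests on thin evidence and needs an analyst to look at the underlying records.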

This also means starting small. Focused pilots are often the best way to test where AI genuinely helps and where it needs stronger human review. The goal is not to hand over requirements thinking to a model. It is to create a better decision-support process for the people accountable for the quality of requirements.

What Comes Next

Over time, AI will likely become a standard part of requirements work. It will help teams review larger bodies of evidence, maintain traceability across changing requirements, generate first-pass workflow models, and highlight downstream impacts more quickly than manual review alone. But the core work will remain human: understanding intent, negotiating tradeoffs, and deciding what matters most for mission delivery and user success.

At REI Systems, this reflects a broader approach to responsible AI and customer experience, where AI strengthens how teams understand user needs while keeping decisions transparent, traceable, and aligned with mission outcomes.

In practice, this is how AI-supported requirements gathering delivers the most value. It gives teams earlier visibility into user needs, supports more informed decisions as requirements take shape, and helps reduce the risk of carrying flawed assumptions into delivery. The result is not just better requirements, but better outcomes for the people who rely on these systems every day.